The Infrastructure Shift in Capital Markets

How tokenization is redesigning lifecycle architecture, settlement dynamics, and capital efficiency

From Theory to Market Reality

It has been some time. I am now returning with a more focused perspective on the institutional side of digital assets, tokenization, crypto treasury strategies, and the underlying dynamics shaping capital market infrastructure beyond the headlines.

Let’s jump right into it. ;-)

…

Over the past few years, I have spent considerable time working on the theoretical foundations of tokenized markets and their architectural design. What is striking today is not the theory itself, but how closely current market developments are beginning to reflect these earlier concepts.

This shift is no longer abstract. It is visible in both the data and the behavior of market infrastructure.

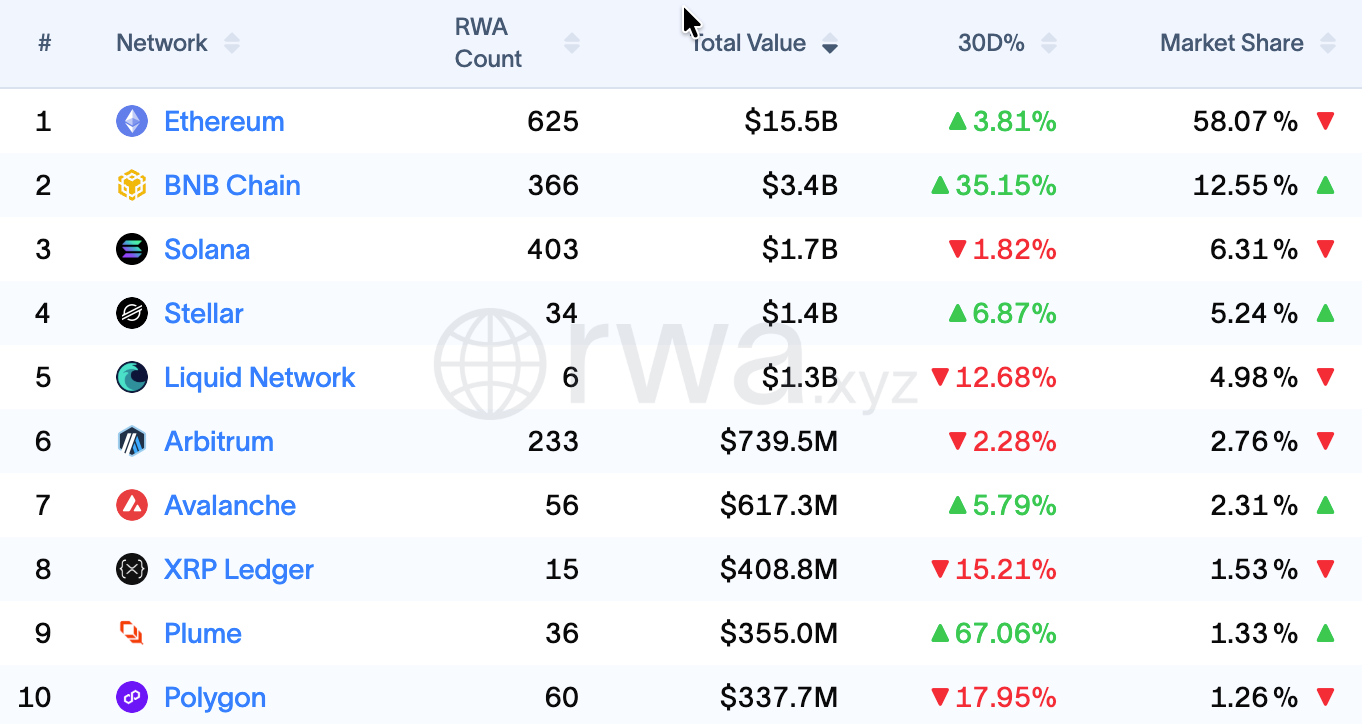

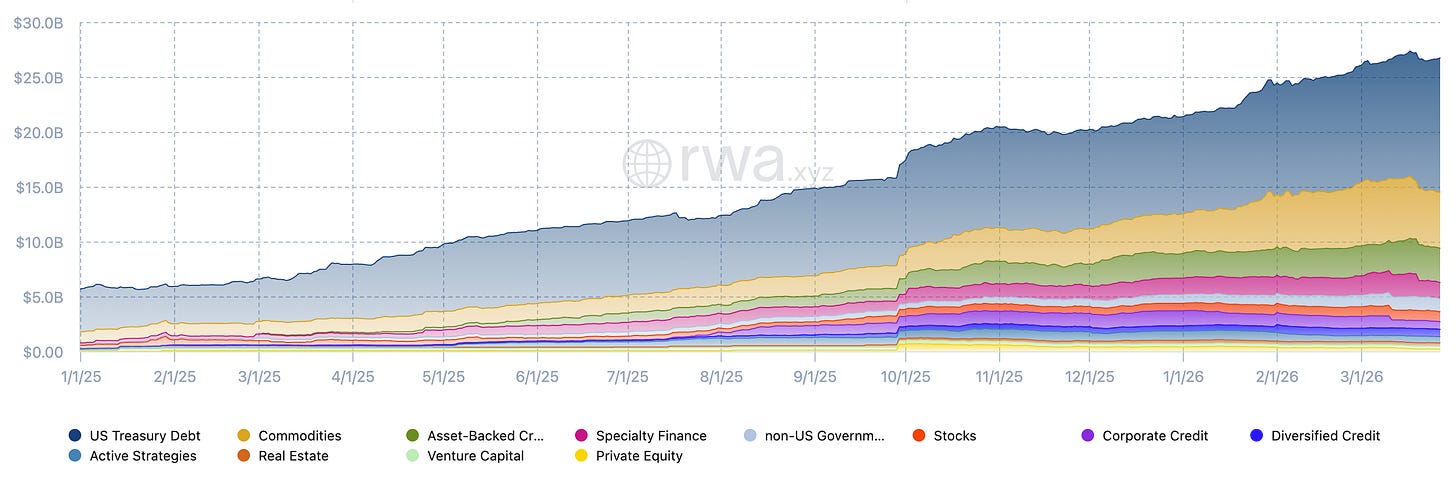

Tokenized real-world assets have grown more than fivefold, from roughly USD 5 billion in early 2025 to approximately USD 26.7 billion, with tokenized U.S. Treasuries alone accounting for around USD 12.2 billion (see Figure 1), according to the analytics platform RWA.xyz. What is equally important, but often overlooked, is the parallel development of the settlement layer.

Stablecoins have evolved into the dominant on-chain settlement and liquidity infrastructure, with total value approaching USD 300 billion. They enable real-time transfer, trading, and collateral movement across tokenized environments, effectively acting as the transactional backbone of emerging digital market structures.

At the same time, core market infrastructure providers are shifting their approach. What was previously treated as experimentation is increasingly moving into early-stage adoption. Nasdaq is developing tokenized equity issuance models, NYSE is exploring extended trading environments with integrated settlement logic, DTCC is advancing tokenized post-trade and collateral infrastructure, and Broadridge is already operating DLT-based repo platforms at scale.

Taken together, these developments point to a clear convergence. The theoretical foundations of tokenized markets are now materializing in capital flows, infrastructure design, and the specific use cases that are scaling first. This is where earlier research on tokenized security design connects directly to the structural shift currently unfolding in practice.

We are no longer approaching an inflection point. We are already moving through it.

The relevant question is therefore no longer whether the technology works, but how it reshapes the architecture of capital markets and which parts of the value chain are being transformed first.

In the following, I take an infrastructure perspective. The core argument is straightforward. Tokenization should not be understood primarily as asset digitization, but as a structural redesign of capital market infrastructure, with direct implications for market structure, capital efficiency, and institutional adoption.

From Representation to Infrastructure

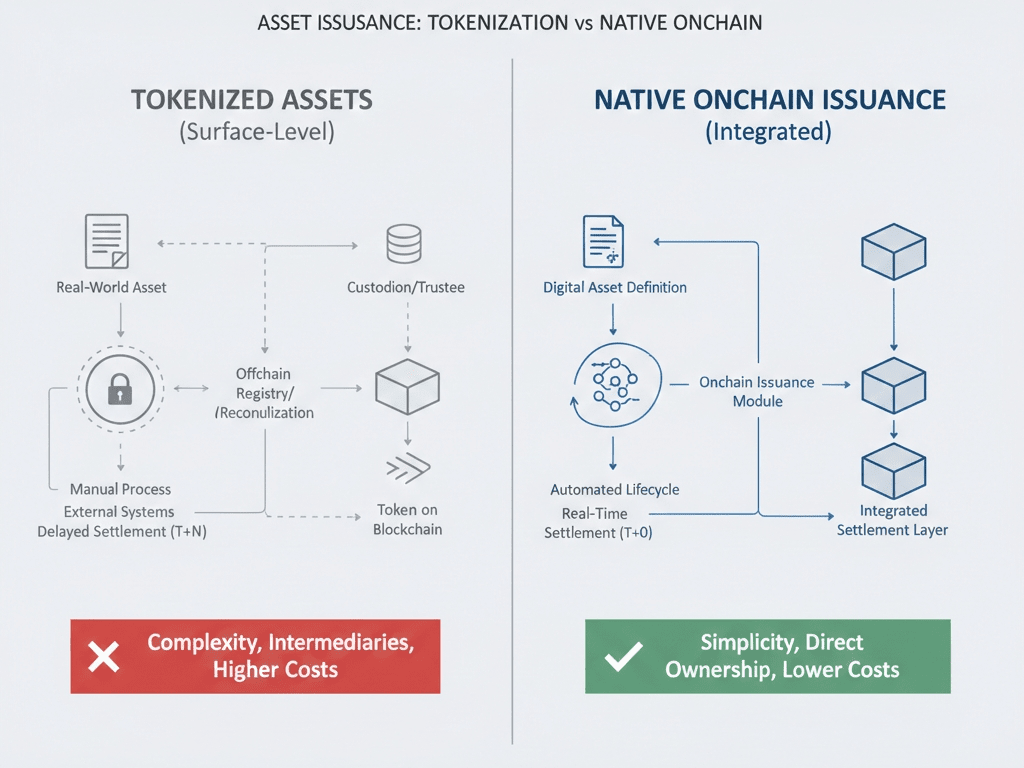

Tokenization has long been framed as a technological overlay on existing financial assets. The dominant narrative suggested that securities could be digitized, transferred more efficiently, and made more accessible, while the underlying market structure remained largely unchanged (Boston Consulting Group, 2022).

In this framing, a bond, a share, or a fund unit is expressed in a new technical format, while the core processes of issuance, transfer, settlement, custody, and compliance remain largely intact. Efficiency gains are expected at the margins, but the overall system architecture is left untouched.

This perspective is increasingly insufficient.

What is now emerging across capital markets is not simply a new representation of assets, but a gradual reconfiguration of the infrastructure through which those assets are issued, transferred, settled, and reused. The distinction is subtle, but fundamental.

The question is no longer whether assets can be tokenized, but what changes once the lifecycle of financial instruments is no longer fragmented across multiple intermediaries and systems, but embedded within a more integrated and programmable environment.

This shift has been anticipated in earlier research on tokenized market design. In this context, studies have shown that efficiency gains do not arise from digitizing assets in isolation, but from embedding governance, compliance, and lifecycle processes directly into system architecture (Guggenberger et al., 2023; World Economic Forum, 2025). From a transaction cost perspective, these gains are driven by the reduction of coordination complexity, uncertainty, and fragmentation at the infrastructure level (Cisar et al., 2025).

Tokenization, in this sense, is not a feature. It is an architectural shift.

Structural Fragmentation as the Root Cause

To understand why this architectural shift matters, it is necessary to revisit how capital markets operate today.

Modern market structure is built on layered specialization. Issuance, trading, clearing, settlement, custody, registry, and compliance are distributed across different institutions and technical systems. Each layer is locally optimized, but the system as a whole remains fragmented.

This fragmentation is not accidental. It reflects decades of legal, institutional, and operational specialization. However, it also introduces structural inefficiencies that become increasingly visible once a more integrated alternative becomes technically feasible.

The following sequence outlines how structural fragmentation in today’s market infrastructure translates into operational inefficiencies across the transaction lifecycle:

Transactions move sequentially across intermediaries.

Records must be reconciled across systems that are not natively aligned.

Legal ownership and operational state can diverge temporarily until settlement finality is reached.

Settlement cycles delay capital availability (T+1 in U.S. securities markets since May 2024, T+2 in most European markets).

Collateral remains locked during the settlement window, limiting its immediate reuse.

Liquidity buffers are required to absorb timing mismatches, creating opportunity costs.

Counterparty risk persists until settlement finality.

Operational risk emerges from coordination, reconciliation, and system dependencies.

These frictions are not marginal. They are embedded in the architecture itself.
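To make the cost of the settlement window more tangible, the following is a minimal sketch of the funding cost of capital that stays locked until finality. All inputs (a USD 100 million position, a 4% funding rate, a 360-day count) are hypothetical values chosen for illustration, not figures from the sources cited here, and the simple-interest formula is a deliberate simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SettlementScenario:
    """Hypothetical inputs for illustration only."""
    notional_usd: float         # value tied up until settlement finality
    annual_funding_rate: float  # assumed cost of funding, e.g. 0.04 for 4%
    settlement_days: float      # days between trade and finality


def funding_cost(s: SettlementScenario, day_count: int = 360) -> float:
    """Simple-interest cost of capital locked during the settlement window."""
    return s.notional_usd * s.annual_funding_rate * s.settlement_days / day_count


t_plus_2 = SettlementScenario(100_000_000, 0.04, 2)
t_plus_1 = SettlementScenario(100_000_000, 0.04, 1)
atomic = SettlementScenario(100_000_000, 0.04, 0)

for label, scenario in [("T+2", t_plus_2), ("T+1", t_plus_1), ("atomic", atomic)]:
    print(f"{label}: USD {funding_cost(scenario):,.2f} per trade")
```

Under these assumptions, the cost scales linearly with the length of the settlement window and falls to zero when delivery and finality coincide. The absolute numbers matter less than the mechanism: the friction is a function of architecture, not of the individual trade.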

This is precisely why current tokenization efforts by market infrastructures focus on settlement, collateral, and ownership design rather than on token issuance alone. The primary objective is not to digitize assets, but to remove coordination layers that constrain capital movement.

Earlier research on tokenized markets addressed exactly this problem. The central insight is consistent. Efficiency gains emerge when surrounding processes are integrated into a coherent system architecture rather than replicated across fragmented layers.

Tokenization becomes economically meaningful only when it addresses structural fragmentation at the infrastructure level.

Integrated Lifecycle Design

The key innovation of tokenization is therefore not the token itself, but the ability to integrate previously separated functions into a unified system architecture.

This perspective is increasingly reflected in recent institutional research, which shifts the focus away from token-level efficiency toward infrastructure transformation and integrated lifecycle design as the primary source of value creation (World Economic Forum, 2025).

Issuance, trading, settlement, and registry no longer need to be treated as strictly sequential stages. Instead, they can be coordinated within a shared infrastructure where data, ownership, and compliance logic are aligned by design. This is the difference between tokenizing an asset and redesigning the lifecycle around it.

A critical distinction must be made between tokenized representations of existing off-chain assets and natively issued DLT-based instruments. While the former often replicate existing structures with incremental efficiency gains, the latter enable deeper integration of lifecycle, settlement, and ownership logic within a single infrastructure.

Several design principles underpin this shift:

Lifecycle integration ensures that assets move through their full lifecycle within a connected environment rather than across disconnected layers.

Programmable compliance embeds regulatory requirements directly into asset logic, reducing reliance on ex-post verification and reconciliation.

Standardization enables interoperability across participants, while permissioned access layers ensure regulatory compatibility.
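The idea of programmable compliance can be sketched in a few lines. The example below is a toy in-memory ledger, not an implementation of ERC-1400 or any other standard. The class and method names (`PermissionedToken`, `approve_investor`, `transfer`) are hypothetical, and the single rule it enforces, a holder whitelist, is only one of many checks a real instrument would carry.

```python
class ComplianceError(Exception):
    """Raised when a transfer violates an embedded rule."""


class PermissionedToken:
    """Toy ledger in which every transfer is checked before state changes."""

    def __init__(self) -> None:
        self.balances: dict[str, int] = {}
        self.whitelist: set[str] = set()

    def approve_investor(self, account: str) -> None:
        # Stands in for a completed onboarding check (assumption).
        self.whitelist.add(account)

    def issue(self, account: str, amount: int) -> None:
        self._require_whitelisted(account)
        self.balances[account] = self.balances.get(account, 0) + amount

    def transfer(self, sender: str, receiver: str, amount: int) -> None:
        # All checks run first, so a rejected transfer leaves no trace.
        self._require_whitelisted(sender)
        self._require_whitelisted(receiver)
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            raise ComplianceError("invalid amount or insufficient balance")
        self.balances[sender] -= amount
        self.balances[receiver] = self.balances.get(receiver, 0) + amount

    def _require_whitelisted(self, account: str) -> None:
        if account not in self.whitelist:
            raise ComplianceError(f"{account} is not an approved holder")


token = PermissionedToken()
token.approve_investor("fund_a")
token.approve_investor("fund_b")
token.issue("fund_a", 1_000)
token.transfer("fund_a", "fund_b", 400)

try:
    token.transfer("fund_b", "unknown_wallet", 100)
except ComplianceError as err:
    print(f"rejected: {err}")
```

The point of the sketch is the ordering: the rule is evaluated as part of the transfer itself, so a non-compliant movement never enters the record and there is nothing to reconcile or unwind afterwards.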

At the same time, these benefits depend heavily on underlying design choices. Tokenized markets can be implemented on public-permissionless, public-permissioned, or private-permissioned infrastructures, each with distinct trade-offs in transparency, control, scalability, privacy, and regulatory alignment (World Economic Forum, 2025).

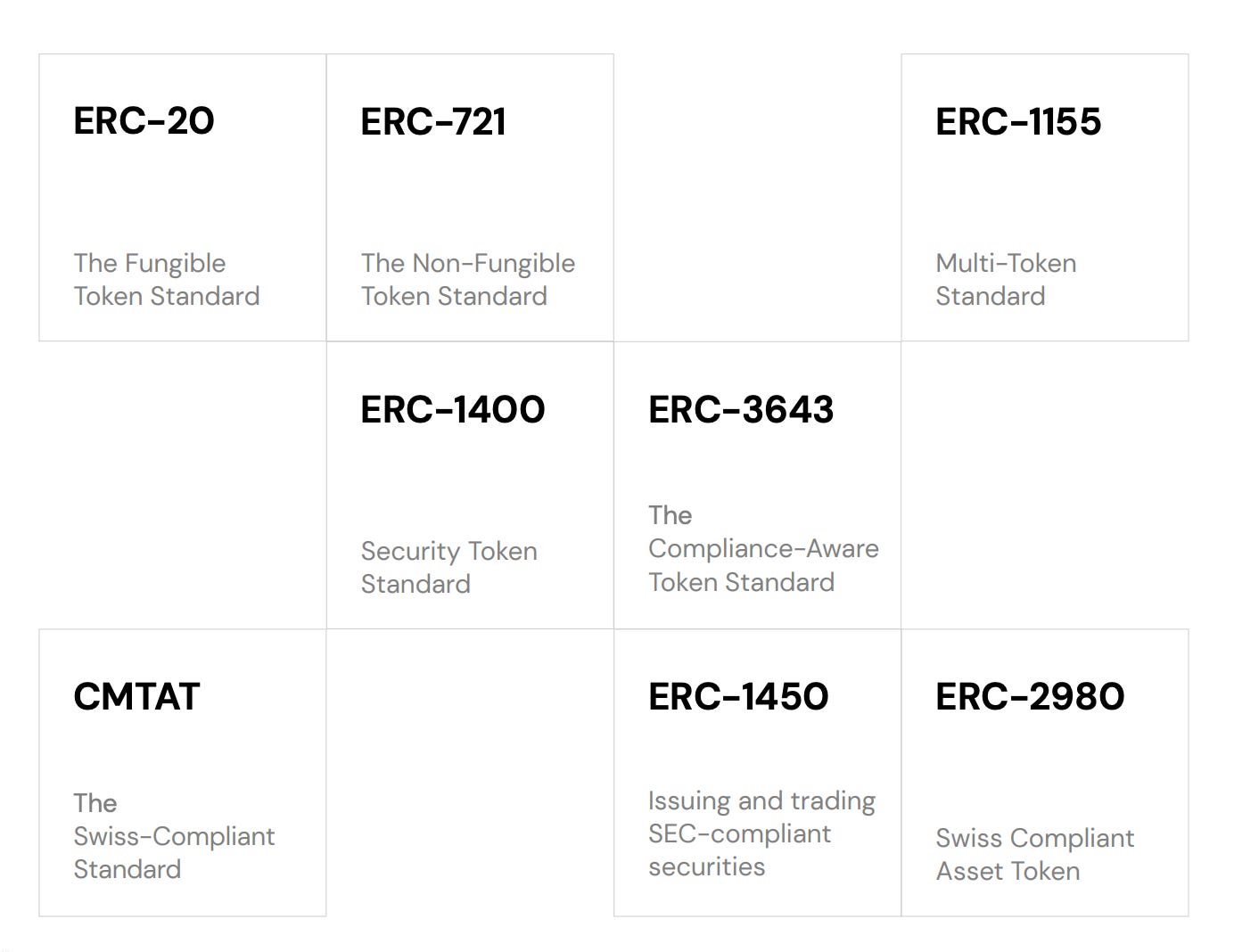

Token standards such as ERC-20 and ERC-1400 form the foundational layer for fungible and permissioned representations. However, institutional-grade implementations require integration beyond the token itself, including regulated registers, identity frameworks, and supervisory oversight.

In jurisdictions such as Germany, this means compliance with the Electronic Securities Act (eWpG), including the use of crypto securities registers operated by entities supervised by the Federal Financial Supervisory Authority (BaFin), alongside KYC-based whitelisting requirements under the German Anti-Money Laundering Act (GwG).

These elements illustrate that tokenized infrastructure is not only a technical construct, but a legally embedded system architecture.

This leads to a fundamental conclusion: Tokenization does not eliminate intermediaries. It restructures them.

Custodians, registrars, trading venues, and infrastructure providers remain central. Their role shifts from coordinating fragmented processes across organizational and technical boundaries to operating within a more integrated system.

This shift does not represent disintermediation, but a reconfiguration of intermediation at the infrastructure level.

Implications for Market Structure

Collateral Mobility

The most important implication of tokenization does not lie in faster settlement alone, but in what faster and more tightly integrated settlement ultimately enables.

In traditional market structures, collateral is operationally constrained by design. Settlement cycles, fragmented custody arrangements, and reconciliation processes delay finality and limit how quickly assets can be reused. This dynamic is well documented in post-trade literature, where settlement latency and structural fragmentation are consistently identified as key drivers of capital inefficiency (DTCC, 2025).

Tokenized infrastructure changes this relationship at a structural level. By aligning transfer, settlement, and registry updates within a shared system environment, delivery-versus-payment can, under appropriate conditions, be executed atomically. Ownership transfer and settlement finality are no longer separated in time, but converge into a single coordinated step. The immediate effect is a reduction in counterparty exposure. The more profound effect is the ability to redeploy capital without delay (DTCC, 2025).
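Atomic delivery-versus-payment can be illustrated with a minimal sketch. It assumes that both the securities leg and the cash leg live in one shared environment, which is the precondition described above. The names `Ledger` and `settle_dvp` are hypothetical, balances are plain integers, and the snapshot-and-restore rollback stands in for whatever transactional guarantee the underlying platform provides.

```python
class SettlementError(Exception):
    """Raised when one leg of a settlement cannot be completed."""


class Ledger:
    """Toy in-memory balance sheet for one asset (securities or cash)."""

    def __init__(self, balances: dict[str, int]) -> None:
        self.balances = dict(balances)

    def move(self, sender: str, receiver: str, amount: int) -> None:
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            raise SettlementError(f"{sender} cannot deliver {amount}")
        self.balances[sender] -= amount
        self.balances[receiver] = self.balances.get(receiver, 0) + amount


def settle_dvp(securities: Ledger, cash: Ledger, seller: str, buyer: str,
               quantity: int, unit_price: int) -> None:
    """Execute both legs as one all-or-nothing step."""
    snapshot = (dict(securities.balances), dict(cash.balances))
    try:
        securities.move(seller, buyer, quantity)         # delivery leg
        cash.move(buyer, seller, quantity * unit_price)  # payment leg
    except SettlementError:
        # Restore both ledgers so no leg settles without the other.
        securities.balances, cash.balances = snapshot
        raise


securities = Ledger({"seller": 100})
cash = Ledger({"buyer": 5_000})

settle_dvp(securities, cash, "seller", "buyer", quantity=10, unit_price=100)
print(securities.balances, cash.balances)

try:
    # The buyer now holds only 4,000 in cash, so the payment leg fails.
    settle_dvp(securities, cash, "seller", "buyer", quantity=50, unit_price=100)
except SettlementError as err:
    print(f"rolled back: {err}")
print(securities.balances, cash.balances)
```

In the second call the delivery leg succeeds and the payment leg fails, yet both ledgers end in the same state as before the attempt. That is the property that removes the window in which one party has delivered and the other has not.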

What emerges is not just speed, but a different capital dynamic. Collateral is no longer passively held between processes, but becomes actively reusable within them.

For asset managers and treasury functions, this translates into tangible flexibility. Capital can be allocated more dynamically across tokenized Treasuries, money market instruments, and stablecoin-based liquidity pools, with stablecoins alone representing close to USD 300 billion in on-chain liquidity (RWA.xyz, 2026). What was previously constrained by operational friction increasingly becomes a function of allocation strategy.

This effect compounds in collateral-intensive markets such as repo and derivatives. Broadridge’s DLT-based repo platform, processing more than USD 1 trillion in monthly volume, illustrates how even incremental improvements in collateral mobility can translate into material balance sheet and liquidity effects at scale (Broadridge, 2026).

Market Participant Impact

These structural changes propagate across the value chain, but they do not affect all participants in the same way. The impact depends on where each actor sits within the market architecture.

Trading venues are moving toward tighter integration between execution and settlement, enabling shorter settlement cycles and, in some cases, more continuous market structures (Nasdaq, 2026; ICE, 2026).

Custodians and CSD-like entities are evolving toward digital asset servicing, combining wallet infrastructure, access control, and on-chain entitlement management within their core offering (DTCC, 2025).

Broker-dealers and market makers retain their central role in liquidity provision, but operate on infrastructure that reduces settlement friction and improves capital efficiency.

Asset managers, in turn, gain increased flexibility in liquidity and collateral management, particularly in fixed income and treasury strategies, where tokenized instruments and stablecoins are already being used in operational contexts.

Yet the more important observation lies beneath these role-specific changes.

Core functions such as issuance, trading, settlement, custody, and asset servicing are not disappearing, but being reorganized. Central securities depositories, custodians, and transfer agents, for example, increasingly see elements of record-keeping and reconciliation embedded into shared ledgers. At the same time, their responsibilities around governance, compliance, and asset servicing remain intact and, in many cases, become more critical (World Economic Forum, 2025).

In parallel, new layers are emerging within the market structure itself. Digital asset exchanges, digital securities depositories, and token issuers are complemented by a growing ecosystem of infrastructure providers, including on-chain identity solutions, interoperability layers, node operators, key management providers, and smart contract auditors (World Economic Forum, 2025).

Taken together, this points to a clear structural outcome. Tokenization does not compress the value chain into a disintermediated end state. It reorganizes it around new technical, operational, and compliance functions.

Across the core participant groups, the shift is therefore primarily operational. What matters is no longer how assets are represented, but how efficiently they can be moved, reused, and integrated into broader financial workflows.

Regulation as Design Constraint

This reconfiguration of market structure does not occur in a regulatory vacuum. On the contrary, regulation is one of its primary design drivers.

In Europe, the regulatory framework is comparatively structured. The DLT Pilot Regime (Regulation (EU) 2022/858) explicitly enables integrated trading and settlement infrastructures, while the Markets in Crypto-Assets Regulation (MiCAR) provides legal clarity for crypto-assets and service providers. Together, these frameworks establish a controlled environment in which new infrastructure models can be tested and scaled under regulatory supervision (European Union, 2022; ESMA, 2023).

In the United States, the picture is more fragmented, but evolving in a similar direction. The GENIUS Act, signed into law in 2025, and legislative initiatives such as the CLARITY Act, alongside evolving SEC positioning on tokenized securities, point toward a clearer segmentation of digital assets and infrastructure roles. At the same time, tokenized securities remain firmly within the scope of existing securities law, reinforcing a key principle: tokenization changes infrastructure design, not legal classification.

Across jurisdictions, the conclusion is consistent. Tokenization does not bypass regulation. It is shaped by it. Viable market architectures emerge where regulatory clarity, infrastructure design, and institutional participation align.

Liquidity and Fragmentation

Despite these developments, liquidity remains the central unresolved constraint.

A common misconception is that tokenization itself creates liquid markets. In reality, liquidity is not a property of technology, but of participation, market design, and capital concentration. Secondary market depth depends on how effectively tokenized infrastructure connects to existing capital pools and institutional participants.

This becomes evident when viewed in context. Even after recent growth, the tokenized real-world asset market, at roughly USD 25 to 30 billion, remains marginal compared to global bond markets alone, which exceed USD 130 trillion (Research and Markets, 2026). Adoption is advancing, but liquidity remains concentrated in a limited number of use cases.
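The order of magnitude is easy to check. The short calculation below uses the USD 26.7 billion figure cited earlier and USD 130 trillion as a lower bound for global bond markets. The 1% threshold is an arbitrary reference point added purely for illustration.

```python
tokenized_rwa_usd = 26.7e9   # tokenized real-world assets (RWA.xyz figure cited earlier)
global_bonds_usd = 130e12    # lower bound for global bond markets cited above

share = tokenized_rwa_usd / global_bonds_usd
print(f"Share of global bond markets: {share:.4%}")  # 0.0205%

# Illustrative assumption: how much growth a 1% share would require.
multiple_to_one_percent = 0.01 / share
print(f"Growth needed to reach 1%: {multiple_to_one_percent:.1f}x")  # 48.7x
```

Even after fivefold growth, the tokenized segment amounts to roughly two basis points of global bond markets alone.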

At the same time, fragmentation persists across multiple dimensions:

Assets are distributed across different blockchain networks.

Liquidity pools are fragmented across protocols and infrastructures.

Users and institutions operate in partially disconnected environments.

In addition, activity is concentrated in a small number of asset classes and platforms, with tokenized Treasuries and a limited set of protocols accounting for the majority of on-chain volume.

Tokenized infrastructure does enable continuous, always-on trading. However, not all market functions can or should operate on a pure 24/7 basis. Asset servicing, risk management, and system maintenance introduce practical constraints, suggesting that hybrid models, combining extended trading windows with controlled operational cycles, are more realistic in regulated market environments.

Interoperability remains a key bottleneck in this context.

Emerging solutions, including cross-chain messaging protocols and interoperability layers such as LayerZero and comparable frameworks, aim to connect otherwise isolated systems. Yet these solutions are still maturing and have not reached the level of standardization required for institutional-scale liquidity.

The implication is therefore structural. Liquidity will not emerge in isolated tokenized environments, but where infrastructure, interoperability, and institutional capital converge.

Conclusion

Tokenization is often described as a technological shift. In practice, it represents a structural transformation of capital market infrastructure.

The most relevant developments are not occurring at the level of asset representation, but at the level of system architecture. Integrated lifecycle design, programmable compliance, and improved collateral mobility are redefining how capital moves through markets.

What is emerging is not a parallel system, but a new operational layer that is gradually being integrated into existing financial markets.

At the same time, this transition introduces new challenges, from cybersecurity risks and operational dependencies on smart contract systems to the need for robust regulatory oversight.

The competitive advantage will therefore not lie in adopting tokenization as a feature, but in understanding and implementing it as an infrastructure paradigm.

References: